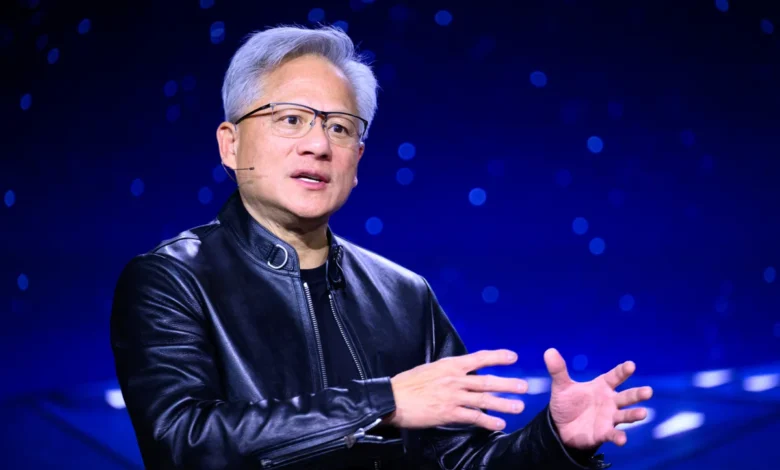

Nvidia CEO Jensen Huang Predicts AI Agents Will Evolve Into Demanding Digital Managers Rather Than Job Destroyers

The landscape of the modern workplace is undergoing a fundamental transformation, not through the wholesale elimination of human roles, but through a radical shift in how those roles are supervised and executed. Speaking at a recent panel hosted by the Stanford Graduate School of Business, Nvidia CEO Jensen Huang offered a provocative vision of the future of labor, suggesting that the primary impact of artificial intelligence will not be the "job apocalypse" many fear. Instead, Huang posits that AI will manifest as a ubiquitous, highly persistent, and occasionally overbearing digital manager. According to Huang, the rise of "AI agents"—autonomous programs designed to execute complex tasks and optimize workflows—will lead to a reality where workers are not replaced but are instead subjected to a new level of digital micromanagement that could make the average workday more demanding than ever before.

Huang’s comments come at a critical juncture for the technology industry, as the initial novelty of generative AI matures into integrated enterprise tools. While early discourse focused on the threat of automation rendering human workers obsolete, Huang’s thesis suggests a more nuanced and potentially more stressful evolution. "Your AI agents are harassing you, micromanaging you, and you’re busier than ever," Huang told the audience, describing a scenario where digital assistants do not merely assist but actively drive the pace of production. This perspective challenges the utopian view of AI as a tool for leisure, suggesting instead that it will be used to extract maximum efficiency from the human workforce.

The Stanford Panel and the Shift Toward Agentic AI

The remarks were delivered during a high-profile session at Stanford, where Huang joined other industry leaders to discuss the long-term implications of the "compute revolution." The core of Huang’s argument lies in the distinction between "Generative AI"—which responds to prompts—and "Agentic AI," which acts with a degree of autonomy. While a chatbot like ChatGPT requires a human to initiate a conversation, an AI agent can be programmed with a goal, such as "increase sales lead conversion by 15%," and will proceed to analyze data, send emails, schedule follow-ups, and nudge human team members to complete their parts of the process.

This shift toward agency is what transforms the AI from a tool into a supervisor. In Huang’s view, these agents will act as digital "naggers," constantly looking over a worker’s shoulder to ensure that tasks are being completed according to optimized timelines. This "overbearing manager" model is a direct result of the AI’s ability to process information at a scale and speed that humans cannot match, leading to a perpetual feedback loop where the human worker is the bottleneck in an otherwise lightning-fast digital system.
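
To make the chatbot-versus-agent distinction concrete, here is a minimal Python sketch of a goal-driven agent that nudges people on its own schedule. It is an illustration only: the `Task` and `GoalAgent` classes, the names, and the due hours are all hypothetical, and no real agent framework or Nvidia product is being modeled.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    owner: str          # human team member responsible (hypothetical names)
    description: str
    due_hour: int       # hour of the day (0-23) by which the task is due
    done: bool = False


@dataclass
class GoalAgent:
    """Hypothetical agent that pursues a goal by nudging task owners."""
    goal: str
    tasks: list[Task] = field(default_factory=list)

    def step(self, current_hour: int) -> list[str]:
        """Run one pass and return a nudge for each overdue, unfinished task."""
        nudges = []
        for task in self.tasks:
            if not task.done and current_hour >= task.due_hour:
                nudges.append(
                    f"{task.owner}: '{task.description}' was due at "
                    f"{task.due_hour}:00 (goal: {self.goal})"
                )
        return nudges


agent = GoalAgent(
    goal="increase sales lead conversion by 15%",
    tasks=[
        Task("Ana", "send follow-up email", due_hour=10),
        Task("Ben", "update lead scores", due_hour=15),
        Task("Cy", "schedule demo call", due_hour=9, done=True),
    ],
)

# Unlike a chatbot, nothing here waits for a prompt: the agent runs on a clock.
for hour in (9, 12, 16):
    for nudge in agent.step(hour):
        print(f"[{hour}:00] {nudge}")
```

The point of the sketch is the control flow: the human never initiates anything. The agent checks its task list on every pass and messages whoever is behind, which is the "nagging" behavior described above in miniature.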

Contextual Background: Nvidia’s Strategic Interest in Agentic Workflows

To understand Huang’s prediction, one must look at Nvidia’s current market position. As the primary supplier of the data center GPUs that power the world’s most advanced AI models, including the H100 (built on the Hopper architecture) and its Blackwell-generation successors, Nvidia has a vested interest in the proliferation of AI agents. Unlike a single large language model (LLM) that a user might query a few times a day, a fleet of autonomous agents requires continuous, massive amounts of compute power.

If every employee in a Fortune 500 company is supported, and managed, by five to ten specialized AI agents, each running continuously, the demand for data center capacity multiplies many times over. This explains the industry’s pivot from "chatbots" to "agents." By framing AI as a constant, micromanaging presence in the workplace, Huang is describing a world that requires an ever-growing supply of the hardware his company produces. However, the social implications of this transition are what concern labor economists and workplace psychologists.
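
The scaling argument can be checked with back-of-the-envelope arithmetic. The sketch below assumes a simple multiplicative model (employees × agents per employee × requests per agent). Only the "five to ten agents" range and the "few times a day" chatbot usage come from the article; the headcount of 50,000 and the 500 requests per agent per day are illustrative assumptions, not reported figures.

```python
def daily_requests(employees: int, agents_per_employee: int,
                   requests_per_agent: int) -> int:
    """Total model requests per day under a simple multiplicative model."""
    return employees * agents_per_employee * requests_per_agent


EMPLOYEES = 50_000          # assumed headcount for a large enterprise
AGENT_REQUESTS = 500        # assumed requests per always-on agent per day

# Baseline: one chatbot per employee, queried "a few times a day" (here, 5).
chatbot = daily_requests(EMPLOYEES, 1, 5)

# Agentic scenario: five to ten always-on agents per employee.
agents_low = daily_requests(EMPLOYEES, 5, AGENT_REQUESTS)
agents_high = daily_requests(EMPLOYEES, 10, AGENT_REQUESTS)

print(f"chatbot baseline: {chatbot:,} requests/day")
print(f"agentic (5 per employee): {agents_low:,} requests/day")
print(f"agentic (10 per employee): {agents_high:,} requests/day")
print(f"multiplier: {agents_low // chatbot}x to {agents_high // chatbot}x")
```

Under these assumptions the load rises by a factor of 500 to 1,000. The growth is multiplicative rather than literally exponential, but the multiplier is large enough to explain why hardware vendors favor the agentic framing.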

Supporting Data: The Growth of Digital Surveillance and Productivity Pressure

Recent industry data supports the trend Huang describes. A 2025 report from Gartner indicated that by 2026, over 40% of large enterprises will have deployed some form of "agentic workflow" where AI autonomously coordinates tasks between departments. Furthermore, a study by the Workhuman Research Institute found that workers in environments with high levels of digital monitoring report 30% higher stress levels than those in traditional settings.

The "micromanagement" Huang refers to is already visible in sectors like logistics and customer service. In Amazon warehouses, for example, algorithms track "time off task" (TOT) with precision that no human manager could achieve. Huang’s prediction suggests that this level of scrutiny is coming for white-collar roles as well. Software developers, marketers, and middle managers may soon find their daily output monitored by AI agents that suggest code optimizations in real-time, flag pauses in creative writing, or demand immediate justification for delays in project milestones.

Chronology of the AI Evolution in the Workplace

The path to the "micromanaging AI" has moved through several distinct phases over the last few years:

- The Exploratory Phase (2022–2023): The release of ChatGPT sparked a global interest in generative AI. Workers used these tools voluntarily to draft emails or summarize documents, viewing them as helpful, optional assistants.

- The Integration Phase (2024–2025): Major software suites like Microsoft 365 and Google Workspace integrated AI directly into the "flow of work." AI began to suggest calendar edits and summarize meetings automatically.

- The Agentic Phase (2025–2026): Companies began moving away from simple prompts toward "autonomous agents." These systems operate in the background, making decisions without constant human intervention.

- The Managerial Phase (2026 and beyond): In the phase Huang predicts, these agents are tasked with performance optimization. They no longer just "help" with work; they define the parameters of how and when work is done.

Official Responses and Reactions from Labor Experts

Labor advocates have reacted to Huang’s comments with a mix of validation and alarm. Dr. Sarah T. Roberts, an expert on digital labor, noted that Huang is "saying the quiet part out loud." According to Roberts, the tech industry’s goal has always been the optimization of human labor, and AI is the ultimate tool for that objective. "When Huang talks about AI ‘harassing’ you, he is describing a workplace where the human element is treated as a component that needs to be tuned for maximum output," she stated in a follow-up commentary.

Conversely, some business leaders argue that this "micromanagement" will actually free humans from the burden of self-organization. Proponents of agentic AI argue that if an AI manages the "boring" parts of project management, such as tracking deadlines and coordinating schedules, humans can focus on "high-value" creative work. However, Huang’s warning that workers will be "busier than ever" suggests that the time saved by AI is immediately filled with more tasks, a dynamic that resembles the Jevons paradox in economics, in which making a resource more efficient to use increases total consumption of it.

Broader Impact and Economic Implications

The economic implications of AI-as-manager are profound. If AI can effectively manage workflows, the need for traditional middle management may decline, not because AI replaces the manager’s "human touch," but because it replaces the manager’s "oversight function." This could lead to a "hollowing out" of corporate hierarchies, where a small group of executive decision-makers oversees a vast pool of frontline workers who are directed entirely by digital agents.

Furthermore, there is the issue of "algorithmic burnout." If a human manager knows an employee is having a difficult day, they might offer flexibility. An AI agent, programmed only for optimization, lacks the empathy to understand human variance. This creates a relentless work environment in which the expected level of "efficiency" is one that no human can sustain indefinitely.

Analysis of the "Harassment" Factor

Huang’s use of the word "harassing" is particularly telling. In a professional context, harassment usually carries a negative legal and social connotation. By using it to describe AI, Huang is acknowledging the friction that occurs when high-speed digital logic meets the slower, more deliberative nature of human thought.

This "harassment" is likely to manifest as a constant stream of notifications, "nudges," and real-time performance corrections. For example, an AI agent might notice a salesperson’s tone on a call is less enthusiastic than usual and send a notification suggesting a change in script. Or, it might see that a designer has been on the same task for three hours and prompt them to switch to a more "urgent" project based on real-time market data. While each individual nudge might be helpful, the cumulative effect is a loss of worker autonomy.

Future Outlook: Adapting to the Digital Supervisor

As we move toward the second half of the decade, the challenge for entrepreneurs and business owners will not be how to replace their staff with AI, but how to maintain a healthy work culture in the age of the digital supervisor. Huang’s vision suggests that the most successful companies will be those that find a balance between AI-driven efficiency and human well-being.

For workers, the "good news" that AI won’t take their jobs may feel like a hollow victory if the jobs that remain are characterized by constant digital surveillance and unyielding productivity demands. The transition from "AI as an assistant" to "AI as a manager" marks a new chapter in the industrial revolution, one where the struggle is no longer for employment, but for the right to work at a human pace.

In conclusion, Jensen Huang’s predictions serve as a stark reminder that technology is rarely neutral. By transforming AI into a digital manager, the tech industry is redefining the nature of the workplace. Whether this leads to a new era of unprecedented productivity or a crisis of worker burnout will depend on how society chooses to regulate and implement these "harassing" digital agents in the years to come.